Executive Summary

Tokenization, the digital representation of real-world assets and financial claims on programmable ledgers, is transitioning from experimental pilots to early institutional adoption. Its significance lies not in replacing existing financial systems, but in rewiring how ownership, settlement, and collateralization function across capital markets. By compressing settlement cycles, enabling fractional ownership, and automating lifecycle events, tokenization has the potential to unlock trapped liquidity, reduce transaction and reconciliation costs, and improve capital allocation efficiency across borders. Crucially, its long-term economic impact will be determined less by the underlying technology and more by legal enforceability, regulatory clarity, and institutional trust.

From a global economic perspective, tokenization sits at the intersection of capital markets modernization, cross-border finance, and monetary infrastructure reform. Global financial assets run into hundreds of trillions of dollars, with large pools of capital remaining structurally illiquid or inefficiently intermediated, most notably the approximately USD 390 trillion global real estate market and the USD 15+ trillion private markets sector.

At the same time, core market infrastructure remains costly and slow: settlement and post-trade processes are estimated to cost USD 17–24 billion annually, and standard securities settlement cycles still span one to three business days (T+1 to T+3), depending on the market. Against this backdrop, asset managers, banks, and market infrastructure providers are leading tokenization adoption in low-risk, short-duration instruments such as money market funds, bonds, and deposits, where operational efficiencies can be realized without introducing systemic instability.

The emerging trajectory points to a phased transformation. Tokenization is moving from proof-of-concept toward selective production use in wholesale and institutional markets, supported by pilots and live issuances from global asset managers and tier-1 banks. Broader retail participation, if it emerges, is likely to follow only after legal frameworks, custody standards, and supervisory regimes mature. In this sense, tokenization should be understood not as a disruptive replacement of the financial system, but as an incremental yet structurally important upgrade to global financial infrastructure.

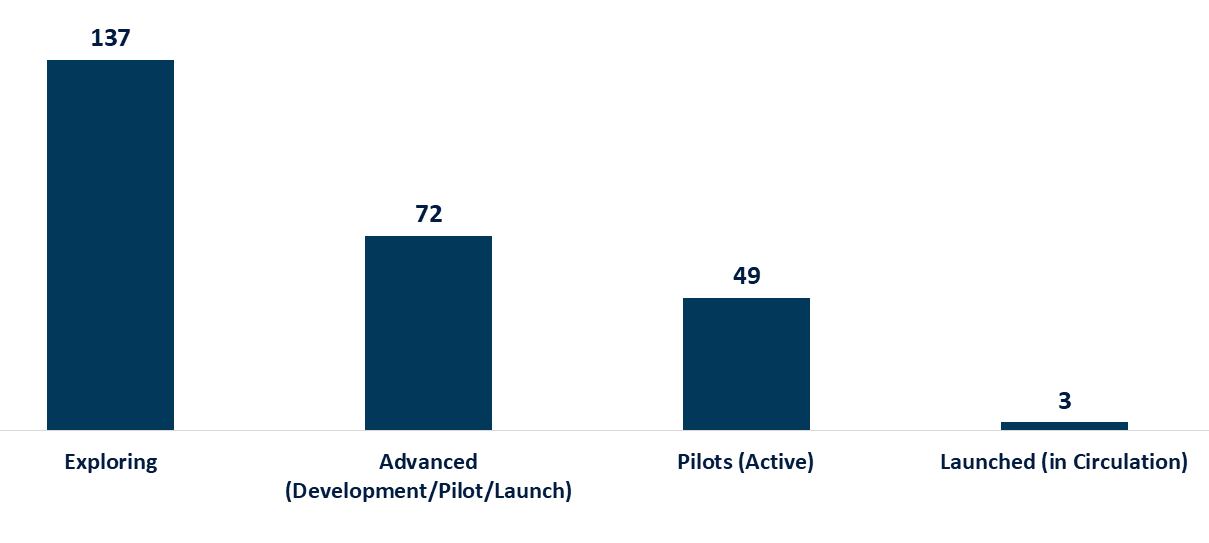

CBDC Activity by Stage (Countries & Currency Unions)

Tokenization as Market Infrastructure: Definition, Design, and Value Creation

Tokenization is the process of generating and recording a digital representation of traditional assets or financial claims on distributed ledger technology (DLT) or other programmable ledgers. Importantly, a token is not ownership in itself; it is a digital claim whose legal meaning depends entirely on off-chain arrangements. Legal title, economic rights, and investor protections arise only when contracts, ownership structures, and regulatory approvals explicitly map those rights to the token.

As a result, tokenization operates through two inseparable layers: a legal layer, comprising SPVs or trusts, contractual rights, custody, title transfer, and regulatory compliance; and a technical layer, encompassing token issuance, smart contracts, wallets, validators, and immutable ledger records. Empirical evidence from early tokenized offerings indicates that investor losses have overwhelmingly stemmed from weak legal structuring or governance failures rather than from deficiencies in blockchain technology itself.
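The interaction between the two layers can be illustrated with a minimal Python sketch. It assumes a simple permissioned register in which the legal layer is reduced to a hypothetical set of eligible investors maintained off-chain by the issuer or transfer agent. The class and field names (`PermissionedToken`, `legal_wrapper`, `eligible_investors`) are illustrative and are not drawn from any specific platform or token standard. The point is that the ledger can only enforce the rules the legal arrangements give it.

```python
from dataclasses import dataclass, field


@dataclass
class PermissionedToken:
    """Technical layer: a token register.

    Legal layer: represented here only by a hypothetical eligibility set that
    the issuer or transfer agent maintains off-chain (an assumption for
    illustration; real structures also involve SPVs, trusts, and contracts).
    """

    legal_wrapper: str  # hypothetical: the SPV or trust whose interests the token represents
    holdings: dict[str, int] = field(default_factory=dict)
    eligible_investors: set[str] = field(default_factory=set)

    def transfer(self, sender: str, recipient: str, amount: int) -> None:
        """Move tokens only if the legal-layer rules allow the recipient to hold them."""
        if amount <= 0:
            raise ValueError("amount must be positive")
        if recipient not in self.eligible_investors:
            # Transfer restriction sourced from the legal layer.
            raise PermissionError(f"{recipient} is not an eligible holder")
        if self.holdings.get(sender, 0) < amount:
            raise ValueError("insufficient balance")
        # All checks passed: update the register.
        self.holdings[sender] -= amount
        self.holdings[recipient] = self.holdings.get(recipient, 0) + amount
```

If the eligibility set is wrong or the legal wrapper does not actually confer the rights the token implies, the code will still execute correctly, which is precisely why legal structuring, not the ledger, determines what a token is worth.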

When these two layers are coherently designed, tokenization can create economic value across multiple channels of the capital markets value chain. At the asset level, fractionalization enables fine-grained ownership of traditionally illiquid assets such as commercial real estate, private equity, and infrastructure, lowering minimum investment thresholds and expanding the effective investor base. This can compress liquidity premia and reduce sponsors’ cost of capital, particularly when compliant secondary trading mechanisms exist.
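To make the fractionalization arithmetic concrete, the following minimal Python sketch assumes a single asset split into equal tokens, with income distributed pro rata to holders. The function names and the example figures are hypothetical; actual token economics and distribution waterfalls are set by the offering documents, not by code.

```python
from decimal import Decimal, ROUND_DOWN

CENT = Decimal("0.01")


def token_price(asset_value: Decimal, total_tokens: int) -> Decimal:
    """Notional value of one token if the asset is split into total_tokens equal units."""
    if total_tokens <= 0:
        raise ValueError("total_tokens must be positive")
    return asset_value / total_tokens


def pro_rata_payout(income: Decimal, holdings: dict[str, int]) -> dict[str, Decimal]:
    """Split distributable income across token holders in proportion to holdings.

    Amounts are rounded down to the cent; any rounding residue stays with the
    issuer (a simplifying assumption, not a market convention).
    """
    total = sum(holdings.values())
    if total <= 0:
        raise ValueError("no tokens outstanding")
    return {
        holder: (income * units / total).quantize(CENT, rounding=ROUND_DOWN)
        for holder, units in holdings.items()
    }
```

Under these assumptions, a hypothetical USD 50 million property split into one million tokens gives `token_price(Decimal("50000000"), 1_000_000)` of USD 50 per token: the total value is unchanged, but the minimum investment falls to the price of a single token.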

At the market-infrastructure level, tokenization enables atomic settlement and delivery-versus-payment (DvP), allowing transactions to settle near-instantly rather than through multi-day batch processes. Given that post-trade and settlement functions are estimated to cost global capital markets USD 17–24 billion annually, even incremental efficiency gains can translate into material economic savings while reducing counterparty and intraday liquidity risk in wholesale markets.
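The core idea of atomic DvP can be shown with a minimal Python sketch, assuming that both the security and the cash leg (for example, a tokenized deposit) are recorded on one shared ledger. The class and asset names (`SharedLedger`, `BOND_TOKEN`, `CASH_TOKEN`) are hypothetical. Both legs are validated before any balance changes, so a trade either settles in full or not at all, which removes the window in which one party has delivered and the other has not yet paid.

```python
from dataclasses import dataclass, field


class SettlementError(Exception):
    """Raised when a trade cannot settle; no balances are changed."""


@dataclass
class SharedLedger:
    # (account, asset) -> balance in integer units (e.g. cents, bond units)
    balances: dict[tuple[str, str], int] = field(default_factory=dict)

    def balance(self, account: str, asset: str) -> int:
        return self.balances.get((account, asset), 0)

    def _move(self, src: str, dst: str, asset: str, qty: int) -> None:
        self.balances[(src, asset)] = self.balance(src, asset) - qty
        self.balances[(dst, asset)] = self.balance(dst, asset) + qty

    def settle_dvp(self, seller: str, buyer: str, security: str, qty: int,
                   cash_asset: str, amount: int) -> None:
        """Atomic delivery-versus-payment: both legs settle, or neither does."""
        if qty <= 0 or amount <= 0 or security == cash_asset or seller == buyer:
            raise ValueError("invalid trade parameters")
        # Validate both legs before touching any balance.
        if self.balance(seller, security) < qty:
            raise SettlementError("seller cannot deliver the security")
        if self.balance(buyer, cash_asset) < amount:
            raise SettlementError("buyer cannot fund the payment")
        # Both checks passed: apply delivery and payment in the same step.
        self._move(seller, buyer, security, qty)
        self._move(buyer, seller, cash_asset, amount)
```

On a real DLT platform, atomicity would come from the ledger's own transaction model (for instance, a single smart-contract call that reverts on failure) rather than from in-process check-then-apply logic; the sketch also ignores concurrency, fees, and settlement-finality rules.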

Beyond ownership and settlement, tokenization introduces a programmable layer to collateral and cross-border finance. Tokenized assets can be reused as collateral in near real time, enabling dynamic margining, automated substitution, and more precise liquidity management for market makers, prime brokers, and corporate treasuries. At the same time, tokenized wholesale money, whether in the form of tokenized bank deposits or wholesale central bank digital currency (CBDC), has demonstrated the potential to shorten cross-border settlement chains and reduce correspondent banking frictions through payment-versus-payment (PvP) and delivery-versus-payment (DvP) mechanisms. However, these efficiencies also introduce new transmission channels for financial stress, reinforcing the need for robust governance, access controls, and regulatory oversight. In this sense, tokenization should be viewed not as a standalone innovation, but as a re-engineering of market infrastructure whose benefits and risks scale together.
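Dynamic margining rests on a simple calculation that tokenized collateral allows to be rerun whenever prices or pledged positions change. The Python sketch below compares haircut-adjusted collateral value with an exposure; the haircut schedule, asset names, and function names are hypothetical and do not reflect any central counterparty's or central bank's actual parameters.

```python
from decimal import Decimal

# Hypothetical haircut schedule, for illustration only.
HAIRCUTS = {
    "TBILL_TOKEN": Decimal("0.02"),
    "MMF_TOKEN": Decimal("0.05"),
    "CORP_BOND_TOKEN": Decimal("0.15"),
}


def collateral_value(pledged: dict[str, Decimal], prices: dict[str, Decimal]) -> Decimal:
    """Haircut-adjusted value of pledged tokenized collateral at current prices."""
    return sum(
        (qty * prices[asset] * (1 - HAIRCUTS[asset]) for asset, qty in pledged.items()),
        Decimal("0"),
    )


def margin_delta(pledged: dict[str, Decimal], prices: dict[str, Decimal],
                 exposure: Decimal) -> Decimal:
    """Positive: shortfall the pledgor must top up. Negative: excess that could be released."""
    return exposure - collateral_value(pledged, prices)
```

Re-evaluating `margin_delta` on every price update approximates dynamic margining; an automated substitution amounts to changing the assets in `pledged` and accepting the swap only if the result stays at or below zero.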

Market Potential and Early Adoption: Where Tokenization Is Scaling and Where It Isn’t

Assessing the economic potential of tokenization requires an asset-class–by-asset-class view, rather than top-down headline estimates. The largest addressable pools sit in asset classes that are both economically significant and structurally illiquid. Global real estate, across residential and commercial segments, represents a multi-hundred-trillion-dollar stock of assets, where tokenization can unlock incremental liquidity through fractional ownership and secondary trading. However, the realistic impact over the next decade is likely to be measured relative to existing listed vehicles, such as REITs, rather than the full property universe.

Private markets, including private equity and private credit, present a similar dynamic: tokenization can lower minimum investment sizes and expand participation among qualified investors, but transfer restrictions, valuation opacity, and investor-protection rules significantly limit near-term retail scalability. By contrast, short-duration, cash-like instruments such as money market funds, treasury bills, and commercial paper exhibit a much clearer product–market fit due to standardized cash flows, frequent issuance, and institutional demand for efficient settlement and collateral use.

Tokenized money market and treasury funds launched by global asset managers have demonstrated institutional appetite for blockchain-native representations of low-risk assets, particularly where tokens can be used as on-chain collateral or settlement assets. In parallel, tier-1 banks are developing tokenized commercial deposit frameworks, positioning on-chain deposits as regulated liabilities that combine the programmability of tokens with the credit backing and compliance standards of the banking system.

At the infrastructure level, wholesale CBDC and cross-border settlement pilots such as Project Jura have validated atomic settlement of tokenized securities against central bank money, materially reducing settlement risk through delivery-versus-payment (DvP) and payment-versus-payment (PvP) mechanisms. While these pilots are narrow in scope, they show that tokenized settlement works in practice where legal finality, custody, and counterparty credit are clearly defined, which is the precondition for operating at scale.

Taken together, these developments point to a multi-speed adoption curve rather than a single addressable market. Tokenization is scaling first in wholesale and institutional contexts (banks, asset managers, and regulated market infrastructure) where operational efficiencies can be realized without expanding systemic risk. Broader participation, including institutional retail and eventually mass retail, remains contingent on legal clarity around transferability, standardized custody frameworks, and supervisory comfort with secondary markets.

Policy Imperatives

Tokenization challenges existing regulatory, legal, and supervisory frameworks not because it creates new financial risks, but because it repackages familiar risks in faster, more automated, and more interconnected forms. Policymakers are therefore increasingly converging on a “same activity, same risk, same regulation” principle, while recognizing that tokenized markets introduce new operational and governance considerations. Our study emphasizes that legal certainty of ownership, settlement finality, custody, and insolvency treatment must precede any large-scale expansion of tokenized markets. Without this foundation, efficiency gains risk being offset by confidence shocks and fragmented adoption across jurisdictions.

From a strategic standpoint, regulators and governments face a sequencing challenge: enabling innovation without prematurely opening retail access or creating parallel market infrastructures that undermine financial stability. The most credible path forward is a graduated approach, prioritizing wholesale and institutional use cases, embedding tokenization within existing regulatory perimeters, and advancing interoperability standards across borders.

Policy and Strategy Priorities

- Establish legal clarity first: Explicit legal recognition of tokenized claims, including enforceability, insolvency treatment, and investor rights, should precede broad market rollout.

- Focus on wholesale adoption: Banks, asset managers, and market infrastructures should remain the primary testbed, where risks are contained and governance is strong.

- Promote interoperability, not fragmentation: Cross-border standards for custody, settlement, and compliance are critical to avoid siloed tokenized markets that limit scalability.

Conclusion

Tokenization is best understood as a structural modernization of financial market infrastructure, not a parallel system competing with existing capital markets. Its early traction in wholesale settlement, short-duration instruments, and institutional asset management reflects a simple reality: the economic benefits of tokenization materialize fastest where legal certainty, standardized assets, and regulated counterparties already exist. In these settings, tokenization can deliver tangible improvements in settlement efficiency, collateral mobility, and operational resilience while keeping new sources of systemic risk contained.

Over time, the relevance of tokenization will be determined less by technological progress than by policy alignment, market discipline, and governance credibility. As legal frameworks mature and interoperability improves, tokenization may gradually extend into more complex and illiquid asset classes, reshaping how capital is pooled, deployed, and recycled across borders.